AMD Says Async Compute Support Gives Radeon GPUs An Advantage Over Nvidia's Offerings In DX12 Gaming

AMD claims that Radeon GPUs' support for asynchronous compute gives the company an edge over rival Nvidia's offerings in DirectX 12 gaming. In-game effects like shadowing, lighting, artificial intelligence, physics and lens effects often require multiple stages of computation before the GPU’s graphics hardware can determine what is rendered onto the screen.

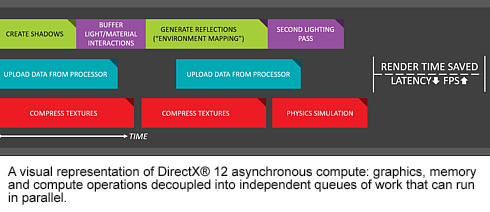

In the past, these steps had to happen sequentially. Step by step, the graphics card would follow the API’s process of rendering something from start to finish, and any delay in an early stage would send a ripple of delays through later stages. Each delay in the pipeline represents a brief moment when some hardware in the GPU is paused, waiting for instructions.

Of course, such "delays" happen all the time on every graphics card. No game can perfectly utilize all the performance or hardware a GPU has to offer.

According to Robert Hallock, the Head of Global Technical Marketing at AMD, Radeon GPUs make the difference through the Graphics Core Next architecture’s ability to pull in useful compute work from the game engine to fill these "bubbles", the moments when some hardware in the GPU is paused waiting for instructions.
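
To make the idea concrete, here is a toy model of a single frame. It is only a sketch: the stage names, timings and the `Stage`, `sequential_frame_time` and `async_frame_time` helpers are all hypothetical, and it does not reflect how GCN hardware actually schedules work. It simply compares running a compute job (say, AI) after the graphics stages with slotting that job into the idle bubbles.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    busy_ms: float   # time the graphics hardware spends doing work
    stall_ms: float  # idle "bubble" spent waiting on an earlier step


def sequential_frame_time(stages, compute_ms):
    """Compute work runs only after all graphics stages have finished."""
    graphics = sum(s.busy_ms + s.stall_ms for s in stages)
    return graphics + compute_ms


def async_frame_time(stages, compute_ms):
    """Compute work is slotted into the bubbles; only the overflow adds time."""
    graphics = sum(s.busy_ms + s.stall_ms for s in stages)
    bubbles = sum(s.stall_ms for s in stages)
    leftover = max(0.0, compute_ms - bubbles)
    return graphics + leftover


# Hypothetical numbers chosen for illustration, not measurements.
stages = [
    Stage("shadows", busy_ms=3.0, stall_ms=1.0),
    Stage("lighting", busy_ms=5.0, stall_ms=2.0),
    Stage("post", busy_ms=2.0, stall_ms=0.5),
]
ai_ms = 3.0

print(sequential_frame_time(stages, ai_ms))  # 16.5
print(async_frame_time(stages, ai_ms))       # 13.5
```

In this made-up frame the 3 ms of AI work fits entirely inside the 3.5 ms of bubbles, so the concurrent version costs no extra frame time. With more compute work than idle time, only the overflow would be added.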

"If there’s a rendering bubble while rendering complex lighting, Radeon GPUs can fill in the blank with computing the behavior of AI instead. Radeon graphics cards don’t need to follow the step-by-step process of the past or its competitors, and can do this work together, or concurrently, to keep things moving," Hallock says.

Filling these bubbles improves GPU utilization, input latency, efficiency and performance for the user by minimizing or eliminating the ripple of delays. AMD claims that only Radeon graphics currently support this crucial capability in DirectX 12 and VR. Last week "Ashes of the Singularity" was updated with support for DirectX 12 Asynchronous Compute. According to AMD's internal testing, a Radeon R9 Fury X GPU was far and away the fastest DirectX 12-ready GPU in that test. Moreover, the DirectX 12 performance drawn from the GCN architecture put a $400 Radeon R9 390X GPU in a performance tie with a $650 GeForce GTX 980 Ti.

We are eager to hear Nvidia's response to that.