Intel Unveils Strategy for Artificial Intelligence

Intel plans to reduce the time needed to train a deep learning model over the next three years, compared to GPU solutions, using Intel Xeon and Xeon Phi processors along with FPGAs. The company will also deliver the new Intel Nervana platform along with developer tools built for cross-compatibility. According to Brian Krzanich, chief executive officer of Intel Corp., "Artificial intelligence (AI) is not only the next big wave in computing - it's the next major turning point in human history." Just as machine tools, factory systems and steam power ushered in the Industrial Revolution and changed every aspect of daily life in some way, Krzanich says that the Intelligence Revolution will be driven by data, neural networks and computing power.

AI will extend our human senses and capabilities to teach us new things, enrich how we interact with the world around us, and improve our decision-making. AI is changing our world for the better, and Intel plans to enable and accelerate AI technology.

The company offers a range of computing solutions in the data center, from general-purpose to targeted silicon, along with computer vision capabilities, memory and storage, extensible programmable assets, and communication technologies. Building upon these assets, Intel has added Saffron, Movidius and Nervana - a set of accelerants required for the growth and widespread adoption of AI.

The Saffron cognitive platform leverages associative and machine learning techniques for memory-based reasoning and transparent analysis of multi-sourced, sparse, dynamic data. This technology is also well-suited to small devices, making intelligent local analytics possible across IoT and helping advance collaborative AI.

Intel's pending acquisition of Movidius, a computer vision powerhouse, will give the company a well-rounded presence in technologies at the edge. Embedded computer vision is increasingly important and Intel has a complete solution, with Intel RealSense cameras seeing in 3D as the "eyes" of a device, Intel CPUs as the "brain," and Movidius Myriad 2 vision processors as the "visual cortex."

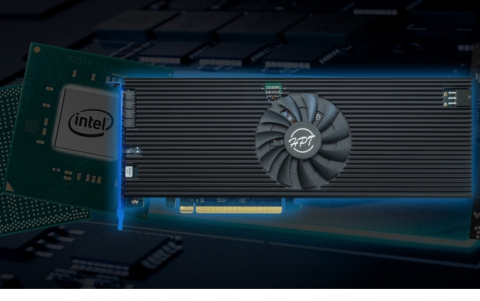

Intel has acquired Nervana Systems to shorten training, a critical phase of the AI development cycle: initially from days to hours, and on the path from hours to minutes. The technology from Nervana will be optimized for neural networks to deliver the highest performance for deep learning, as well as compute density with high-bandwidth interconnect for model parallelism.

The Nervana technology will be integrated into Intel's product roadmap. The company will test first silicon (code-named "Lake Crest") in the first half of 2017 and will make it available later in the year. In addition, Intel announced a new product (code-named "Knights Crest") on the roadmap that integrates Intel Xeon processors with the technology from Nervana.

Intel expects Nervana's technologies to produce a breakthrough 100-fold increase in performance in the next three years to train complex neural networks, enabling data scientists to solve their biggest AI challenges faster.

The age of AI is in its early days. Intel Xeon processors paired with GPUs are being used for deep learning, which is a rapidly growing subset of machine learning. Some scientists have used GPGPUs because they happen to have parallel processing units for graphics, which can be applied to deep learning. However, Krzanich believes that the GPGPU architecture "is not uniquely advantageous for AI," and as AI continues to evolve, both deep learning and machine learning "will need highly scalable architectures," such as the Intel architecture.

Today, Intel powers 97 percent of data center servers running AI workloads. Its portfolio ranges from Intel Xeon processors and Intel Xeon Phi processors to more workload-optimized accelerators, including FPGAs (field-programmable gate arrays) and the technology acquired from Nervana.

According to Diane Bryant, executive vice president and general manager of the Data Center Group at Intel, the company expects that the next generation of Intel Xeon Phi processors (code-named "Knights Mill") will deliver up to 4x better performance than the previous generation for deep learning and will be available in 2017. In addition, Intel is shipping a preliminary version of the next generation of Intel Xeon processors (code-named "Skylake") to select cloud service providers. With AVX-512, an integrated acceleration advancement, these Intel Xeon processors will boost the performance of inference for machine learning workloads. Additional capabilities and configurations will be available when the platform family launches in mid-2017.

Intel will also work with Google to help enterprise IT deliver an open, flexible and secure multi-cloud infrastructure for their businesses. The collaboration includes technology integrations focused on Kubernetes (containers), machine learning, security and IoT.

To further AI research and strategy, Intel announced the formation of the Intel Nervana AI board. The company announced four founding members: Yoshua Bengio (University of Montreal), Bruno Olshausen (UC Berkeley), Jan Rabaey (UC Berkeley) and Ron Dror (Stanford University).

Additionally, Intel is working to make AI more accessible. To help accomplish this, Intel has introduced the Intel Nervana AI Academy for broad developer access to training and tools. Intel also introduced the Intel Nervana Graph Compiler to accelerate deep learning frameworks on Intel silicon.

In conjunction with the AI Academy, Intel is partnering with education provider Coursera to provide a series of online AI courses to the academic community. Intel also launched a Kaggle competition (coming in January) jointly with Mobile ODT, in which the academic community can put its AI skills to the test on real-world socioeconomic problems, such as early detection of cervical cancer in developing countries through the use of AI for soft tissue imaging.