Google Lens Enhanced, Recognizes More Than One Billion Products

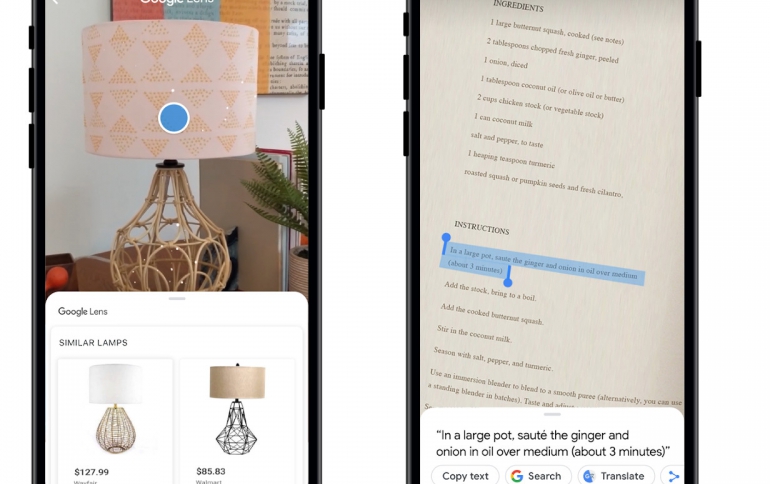

Google’s AI-powered camera tool Lens can now recognize over a billion items, four times the number it covered at launch, Google says.

Google Lens launched last year in a preliminary version in Photos and Assistant, with only around 250,000 items in its repertoire. Last week, Google launched a redesigned Lens experience across Android and iOS, and brought it to iOS users via the Google app.

Lens takes advantage of machine learning and computer vision. But a machine learning algorithm is only as good as the data it learns from. That’s why Lens draws on the hundreds of millions of Image Search queries for a given item it "scans," along with the thousands of images returned for each query, as training data for its algorithms.

Lens uses TensorFlow, Google’s open-source machine learning framework, to connect images to the words that characterize those images. Lens then connects those labels to Google's Knowledge Graph, with its tens of billions of facts on everything from pop stars to puppy breeds. This helps Lens understand that, for example, a "Shiba Inu" is a breed of dog.
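To make the label-to-fact step concrete, here is a minimal sketch in Python. It is a toy illustration only: the `KNOWLEDGE_GRAPH` dictionary and the `describe` function are hypothetical names invented for this example and say nothing about how Google's Knowledge Graph is actually stored or queried.

```python
# Toy stand-in for a knowledge graph: label -> facts.
# The structure and entries are assumptions made for illustration.
KNOWLEDGE_GRAPH = {
    "shiba inu": {"type": "dog breed", "origin": "Japan"},
    "golden retriever": {"type": "dog breed", "origin": "Scotland"},
}


def describe(label):
    """Look up a predicted image label and return a short description."""
    facts = KNOWLEDGE_GRAPH.get(label.strip().lower())
    if facts is None:
        return f"{label}: no facts found"
    return f"{label} is a {facts['type']}"


# A label produced by an image classifier is enriched with a known fact.
print(describe("Shiba Inu"))
```

The point of the sketch is the separation of concerns: the vision model only has to produce a label, and a separate lookup turns that label into something meaningful.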

Oftentimes, what we see in our day-to-day lives looks fairly different from the images on the web used to train computer vision models. We point our cameras from different angles, at various locations, and under different types of lighting. And the subjects of these photos don’t always stay still. Google is starting to address this by training the algorithms with more pictures that look like they were taken with smartphone cameras.
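The article does not say how Google obtains these smartphone-like pictures. A related and widely used technique, shown here only as an illustration and not as Google's method, is data augmentation: perturbing existing training images so they vary in lighting and orientation. The sketch below assumes a tiny grayscale image stored as nested lists of 0-255 values; all function names are hypothetical.

```python
import random


def adjust_brightness(image, factor):
    """Scale every pixel by `factor`, clamping the result to 0-255."""
    return [[max(0, min(255, round(p * factor))) for p in row] for row in image]


def flip_horizontal(image):
    """Mirror the image left to right."""
    return [row[::-1] for row in image]


def augment(image, rng):
    """Return a randomly perturbed copy of `image` (lighting and mirroring)."""
    out = adjust_brightness(image, rng.uniform(0.5, 1.5))
    if rng.random() < 0.5:
        out = flip_horizontal(out)
    return out


# A 2x3 grayscale "image" and one randomly perturbed variant of it.
image = [[0, 64, 128], [192, 255, 32]]
variant = augment(image, random.Random(0))
```

Each call to `augment` yields a slightly different version of the same picture, which gives a model more varied examples than the original web image alone.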

Lens can also read text and let you act on the words you see. For example, you can point your phone at a business card and add it to your contacts, or copy ingredients from a recipe and paste them into your shopping list.

To teach Lens to read, Google developed an optical character recognition (OCR) engine and combined that with Lens' understanding of language from search and the Knowledge Graph. Google trains the machine learning algorithms using different characters, languages and fonts, drawing on sources like Google Books scans.
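One way to picture how character recognition and language knowledge can work together is a post-correction step: noisy OCR output is snapped to the closest word in a known vocabulary. The article does not describe Google's actual mechanism, so the following is only a sketch under that assumption. The `VOCABULARY` list and both function names are hypothetical, and the fuzzy matching uses Python's standard `difflib` module.

```python
import difflib

# Hypothetical vocabulary standing in for language knowledge.
VOCABULARY = ["flour", "sugar", "butter", "eggs", "vanilla"]


def correct_token(token, vocabulary=VOCABULARY, cutoff=0.7):
    """Replace a noisy OCR token with its closest vocabulary word, if any."""
    matches = difflib.get_close_matches(token.lower(), vocabulary, n=1, cutoff=cutoff)
    return matches[0] if matches else token


def correct_line(raw_text):
    """Correct each whitespace-separated token of an OCR'd line."""
    return " ".join(correct_token(t) for t in raw_text.split())


# Typical OCR confusions: "1" for "l", "n" for "a", "c" for "e".
print(correct_line("f1our sugnr buttcr"))  # prints "flour sugar butter"
```

Tokens with no sufficiently close match are left unchanged, so unfamiliar words such as names on a business card are not forced into the vocabulary.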